System Information

| Field | Value |
|---|---|
| Operating System | Linux - Debian GNU/Linux 13 on x86_64 |
| Product | AMP ‘Phobos’ v2.6.5.2 (Mainline) |
| Virtualization | Docker |
| Application | Satisfactory |
| Module | GenericModule |
| Running in Container | Yes |
| Current State | Ready |

Problem Description

Issue

Since one of the recent AMP updates, my Satisfactory server no longer takes a backup and starts up again after its nightly scheduled backup.

I was finally able to pinpoint it to the “dirty only” flag in the backup schedule: if it is enabled, the server seems to crash during the backup.

LogModuleManager: Shutting down and abandoning module ComputeFramework (78)

LogModuleManager: Shutting down and abandoning module ChunkDownloader (76)

LogModuleManager: Shutting down and abandoning module LauncherChunkInstaller (74)

LogModuleManager: Shutting down and abandoning module OnlineSubsystem (72)

LogModuleManager: Shutting down and abandoning module HTTP (66)

LogModuleManager: Shutting down and abandoning module SSL (65)

LogModuleManager: Shutting down and abandoning module OnlineSubsystemUtils (61)

LogModuleManager: Shutting down and abandoning module OnlineServicesCommonEngineUtils (59)

LogModuleManager: Shutting down and abandoning module OnlineServicesCommon (57)

LogModuleManager: Shutting down and abandoning module OnlineServicesNull (55)

LogModuleManager: Shutting down and abandoning module OnlineServicesInterface (54)

LogModuleManager: Shutting down and abandoning module NiagaraVertexFactories (51)

LogModuleManager: Shutting down and abandoning module NiagaraShader (49)

LogModuleManager: Shutting down and abandoning module ChaosCloth (47)

LogModuleManager: Shutting down and abandoning module VariantManagerContent (45)

LogModuleManager: Shutting down and abandoning module GLTFExporter (43)

LogModuleManager: Shutting down and abandoning module DatasmithContent (41)

LogModuleManager: Shutting down and abandoning module LensDistortion (39)

LogModuleManager: Shutting down and abandoning module OptimusSettings (37)

LogModuleManager: Shutting down and abandoning module ACLPlugin (35)

LogModuleManager: Shutting down and abandoning module AISupportModule (33)

LogModuleManager: Shutting down and abandoning module SteamDeckConfig (31)

LogModuleManager: Shutting down and abandoning module PythonScriptPluginPreload (29)

LogModuleManager: Shutting down and abandoning module PlatformCryptoOpenSSL (27)

LogModuleManager: Shutting down and abandoning module PlatformCryptoTypes (25)

LogModuleManager: Shutting down and abandoning module PlatformCrypto (23)

LogModuleManager: Shutting down and abandoning module IoStoreOnDemand (21)

LogModuleManager: Shutting down and abandoning module RenderCore (19)

LogModuleManager: Shutting down and abandoning module Landscape (16)

LogModuleManager: Shutting down and abandoning module AnimGraphRuntime (14)

LogModuleManager: Shutting down and abandoning module Renderer (12)

LogModuleManager: Shutting down and abandoning module Engine (10)

LogModuleManager: Shutting down and abandoning module CoreUObject (8)

LogModuleManager: Shutting down and abandoning module SandboxFile (6)

LogModuleManager: Shutting down and abandoning module PakFile (4)

LogPakFile: Destroying PakPlatformFile

LogModuleManager: Shutting down and abandoning module RSA (3)

LogExit: Exiting.

LogCore: FUnixPlatformMisc::RequestExit(bForce=false, ReturnCode=130)

Exiting abnormally (error code: 130)

Reproduction Steps

- Set up a backup schedule with “Shut the server down, take a backup, and start it up again.”

- Select “local” and “dirty only”

- Wait for the backup to start and look in the console

Edit2: The error “Exiting abnormally (error code: 130)” seems to have nothing to do with the “dirty only” flag, as it is also shown when doing a normal shutdown and backup.
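For context on that exit code: on Unix-like systems, a status above 128 conventionally means "128 plus a signal number", so 130 would correspond to signal 2 (SIGINT). That would fit a server being stopped on purpose rather than crashing, though whether AMP actually stops the server this way is an assumption on my part. A minimal sketch of the decoding, with `describe_exit_code` being a hypothetical helper name:

```python
import signal


def describe_exit_code(code: int) -> str:
    """Interpret an exit status using the common '128 + signal number' convention."""
    if code == 0:
        return "clean exit"
    if code > 128:
        signum = code - 128
        try:
            # Look up the symbolic name of the signal, e.g. 2 -> SIGINT
            name = signal.Signals(signum).name
        except ValueError:
            name = f"unknown signal {signum}"
        return f"terminated by {name} (128 + {signum})"
    return f"application error code {code}"


print(describe_exit_code(130))  # terminated by SIGINT (128 + 2)
```

If that reading is right, the “Exiting abnormally” line is just how a requested shutdown gets reported, which matches it showing up on normal shutdown backups too.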

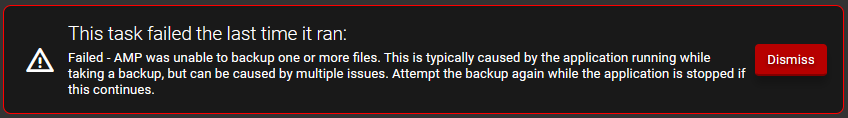

Edit: By the way, the normal backup schedule has never worked with Satisfactory for me. It always issued this message. It wasn’t really a big problem, as I was able to get it running with “Shut the server down, take a backup, and start it up again.” But since I already have a ticket open, I wanted to address this as well and ask whether it is already known or whether it could be an issue on my end.

It is also not that big of a deal, as it worked without any issue (unless “dirty only” is selected).